In January 2021, the OpenAI consortium (co-founded by Elon Musk and financially backed by Microsoft) unveiled its most ambitious project to date, the DALL-E machine learning system. This ingenious multimodal AI was capable of generating images (albeit rather cartoonish ones) based on the attributes described by a user: think “a cat made of sushi” or “an x-ray of a capybara sitting in a forest.” On Wednesday, the consortium unveiled DALL-E’s next iteration, which boasts higher resolution and lower latency than the original.

OpenAI

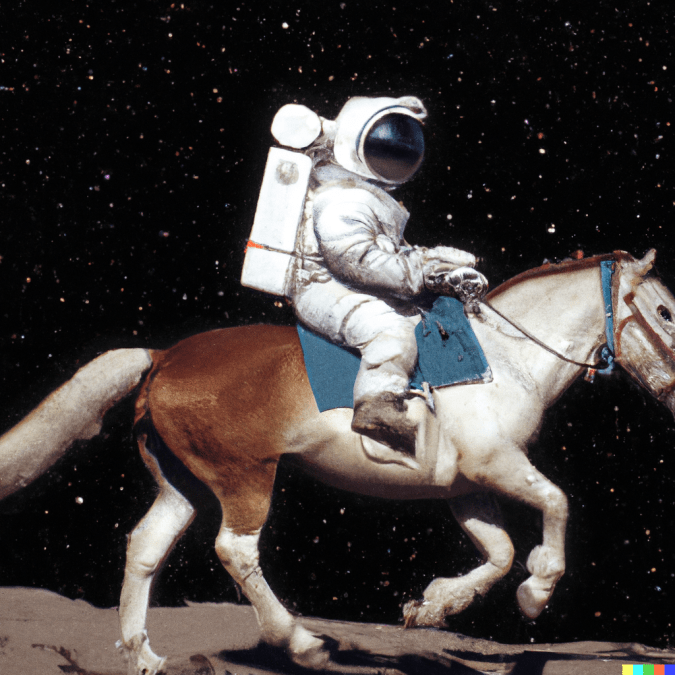

The first DALL-E (a portmanteau of “Dali,” as in the artist, and “WALL-E,” as in the animated Disney character) could generate images as well as combine multiple images into a collage, provide varying angles of perspective, and even infer elements of an image — such as shadowing effects — from the written description.

“Unlike a 3D rendering engine, whose inputs must be specified unambiguously and in complete detail, DALL·E is often able to ‘fill in the blanks’ when the caption implies that the image must contain a certain detail that is not explicitly stated,” the OpenAI team wrote in 2021.

DALL-E was never intended to be a commercial product and was therefore somewhat limited in its abilities, given the OpenAI team’s focus on it as a research tool. It has also been intentionally capped to avoid a Tay-esque situation or the system being leveraged to generate misinformation. Its sequel has been similarly sheltered, with potentially objectionable images preemptively removed from its training data and a watermark indicating that it’s an AI-generated image automatically applied. Additionally, the system actively prevents users from creating pictures based on specific names. Sorry, folks wondering what “Christopher Walken eating a churro in the Sistine Chapel” would look like.

DALL-E 2, which utilizes OpenAI’s CLIP image recognition system, builds on those image generation capabilities. Users can now select and edit specific areas of existing images, add or remove elements along with their shadows, mash up two images into a single collage, and generate variations of an existing image. What’s more, the output images are 1024px squares, up from the 256px avatars the original version generated. OpenAI’s CLIP was designed to look at a given image and summarize its contents in a way humans can understand. For the new system, the consortium reversed that process, building an image from its summary.

“DALL-E 1 just took our GPT-3 approach from language and applied it to produce an image: we compressed images into a series of words and we just learned to predict what comes next,” OpenAI research scientist Prafulla Dhariwal told The Verge.

Unlike the first, which anybody could play with on the OpenAI website, this new version is currently only available for testing by vetted partners, who themselves are limited in what they can upload or generate with it. Only family-friendly sources can be utilized, and anything involving nudity, obscenity, extremist ideology or “major conspiracies or events related to major ongoing geopolitical events” is right out. Again, sorry to the folks hoping to generate “Donald Trump riding a naked, COVID-stricken Nancy Pelosi like a horse through the US Senate on January 6th while doing a Nazi salute.”

The current crop of testers is also banned from exporting their generated works to a third-party platform, though OpenAI is considering adding DALL-E 2’s abilities to its API in the future. If you want to try DALL-E 2 for yourself, you can sign up for the waitlist on OpenAI’s website.
